A novel compression technique ensuring comparable performance with 70% less parameters Author Amanda Kau ( ORCID : 0009–0004–4949–9284) Introduction The sizes of large language models (LLMs) have been steadily increasing over the last few years.

Enhancing Data Interactivity with LLMs and Neo4j Knowledge Graphs Author Wenyi Pi ( ORCID : 0009–0002–2884–2771) Introduction Since OpenAI launched ChatGPT, a large language model (LLM) based chatbot, in 2023, it has set off a technological wave.

Author Dhruv Gupta ( ORCID : 0009–0004–7109–5403) Introduction Large language models (LLMs) have become the new face of natural language processing (NLP). Given their generative power and ability to comprehend human language, reliance on these models is increasing every day. However, LLMs are known to hallucinate and thus produce wrong outputs.

Automated Knowledge Graph Construction with Large Language Models — Part 2 Harvesting the Power and Knowledge of Large Language Models Author Amanda Kau ( ORCID : 0009–0004–4949–9284 ) Introduction Knowledge graphs (KGs) are a structured representation of data in a graphical format, in which entities are represented by nodes and are connected by edges representing relationships

Using Mistral for Data tagging Author · Xuzeng He ( ORCID: 0009–0005–7317–7426) Introduction Data tagging, in simple terms, is the process of assigning labels or tags to your data so that they are easier to retrieve or analyse.

An improvement architecture superior to the Transformer, proposed by Meta Author · Qingqin Fang ( ORCID: 0009–0003–5348–4264) Introduction Recently, researchers from Meta and the University of Southern California introduced a model called Megalodon. They claim that this model can expand the context window of language models to handle millions of tokens without exhausting memory.

Understanding the Evolutionary Journey of LLMs Author Wenyi Pi ( ORCID : 0009–0002–2884–2771) Introduction When we talk about large language models (LLMs), we are actually referring to a type of advanced software that can communicate in a human-like manner. These models have the amazing ability to understand complex contexts and generate content that is coherent and has a human feel.

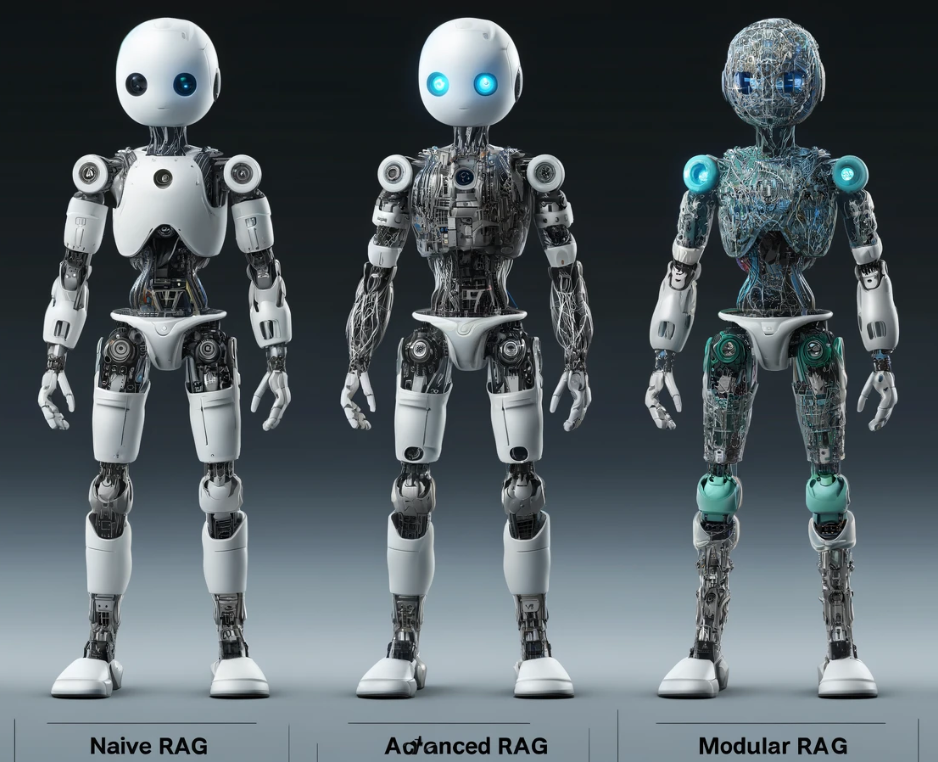

From Naive to Modular: Tracing the Evolution of Retrieval-Augmented Generation Author · Vaibhav Khobragade ( ORCID: 0009–0009–8807–5982) Introduction Large Language Models (LLMs) have achieved remarkable success.

Supervised Fine-tuning, Reinforcement Learning from Human Feedback and the latest SteerLM Author · Xuzeng He ( ORCID: 0009–0005–7317–7426) Introduction Large Language Models (LLMs), usually trained with extensive text data, can demonstrate remarkable capabilities in handling various tasks with state-of-the-art performance. However, people nowadays typically want something more personalised instead of a general solution.

Attention mechanism not getting enough attention Author Dhruv Gupta ( ORCID : 0009–0004–7109–5403) Introduction As discussed in this article, RNNs were incapable of learning long-term dependencies. To solve this issue, both LSTMs and GRUs were introduced. However, even though LSTMs and GRUs did a fairly decent job for textual data, they did not perform well.